Augmented Reality Touch Table

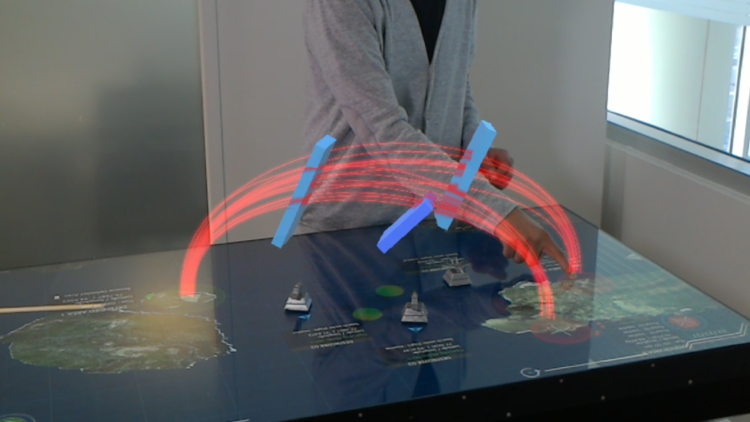

A touch table with augmented reality integration, designed as a mission-planning system for the US Navy and the Johns Hopkins Applied Physics Laboratory.

I presented the product to members of private industry and government at the Tactical Advancements for the Next Generation (TANG) 2017 event in Norfolk, VA.

Two Levels of Information

The touch table shows data in two layers – one visible on the table, and another seen only by the Microsoft HoloLens wearer.

Operation Planning

Users can design missions by positioning 3D-printed figurines on the table; the HoloLens wearer can see “top secret” projectile paths and additional asset capabilities corresponding to each model.
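The two-layer idea can be illustrated with a minimal sketch. It assumes each piece of displayed information is tagged with the layer it belongs to. The names `Layer`, `Overlay`, and `visible_overlays` are hypothetical and chosen for illustration; this is not the project's actual code.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    TABLE = "table"      # visible to everyone around the table
    HEADSET = "headset"  # visible only to the headset wearer


@dataclass(frozen=True)
class Overlay:
    label: str    # hypothetical description of what is drawn
    layer: Layer  # which of the two layers it belongs to


def visible_overlays(overlays, wearing_headset):
    """Headset wearers see both layers; everyone else sees only the table layer."""
    return [o for o in overlays if wearing_headset or o.layer is Layer.TABLE]


# Example data, invented for illustration.
overlays = [
    Overlay("asset position", Layer.TABLE),
    Overlay("projectile path", Layer.HEADSET),
]
```

Calling `visible_overlays(overlays, wearing_headset=False)` keeps only the table-layer item, while passing `True` returns both.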

Hand-Painted Components

I designed the 3D figurines to represent assets of the US Navy, working with a 3D printing shop to build them in ABS plastic. I finished the components with Bondo, XTC-3D, and acrylic paints to give them a metallic sheen.

Asset Identification

QR codes attached to the bottom of the figurines allow cameras inside the MultiTaction table to distinguish the figures from one another.
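As a rough illustration of this identification step, the sketch below maps a decoded code payload to an asset record. Everything in it is an assumption made for illustration: the payload strings, the `Asset` fields, and the `identify_asset` helper are hypothetical and do not come from the project's actual software.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Asset:
    name: str  # display name for the figurine
    kind: str  # broad category, e.g. "ship"


# Hypothetical registry: one unique payload per figurine.
ASSETS_BY_CODE = {
    "asset-001": Asset(name="Destroyer", kind="ship"),
    "asset-002": Asset(name="Submarine", kind="submarine"),
}


def identify_asset(payload: str) -> Optional[Asset]:
    """Return the asset for a decoded code payload, or None if it is unknown."""
    return ASSETS_BY_CODE.get(payload.strip())
```

Because each figurine carries a unique payload, a plain dictionary lookup is enough to tell them apart, and an unrecognized code returns `None` rather than raising an error.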

A Successful Exhibit

I designed the component layout within the table structure and physically assembled both the table and the audio-visual setup inside it. I led assembly of the table at TANG 2017 and presented it to clients and visitors at the show.

Constructive Feedback

I returned from the show with UI and UX suggestions for the Unity developers, based on both my own experience and user feedback collected during my presentations at TANG. The fixes were implemented before the product was officially released to the client. I also trained the client in the assembly, use, and disassembly of the product.